After interacting with AI and voice interfaces such as Google Gemini, ChatGPT, Apple Siri, and Amazon Alexa, and researching the bold claims made about Google’s LaMDA, I believe we are heading toward the themes explored in the Star Trek: The Next Generation episode “The Measure of a Man.” This episode centers on the debate over whether an artificial intelligence is sentient and deserving of human rights, or whether its machine nature and usefulness to man make it mere property.

Comparison of AI and Voice Interfaces

Star Trek’s AI assistant, Data, is an android with a human figure and the capabilities of a computer. He can compute problems quickly, communicate clearly, and perform any request asked by a human. He behaves like a human for the most part and is built from technology so sophisticated that no one other than his creator has been able to replicate it. When comparing Data with Apple’s Siri and Amazon’s Alexa, the differences are night and day. Just like Data, these voice programs will answer any question asked by the user to the best of their ability. Even though they get the job done, they simplify the information and leave out detail when providing an answer. These programs respond with a voice just like Data, but they lack conversation skills, which makes them feel more like a robot than a human. When adding Google Gemini and ChatGPT into the mix, their computational power and ability to provide useful information can be considered comparable to Data’s capabilities. The one advantage these AI programs have is portability: you can use them on almost any tech product, while Data has a physical body that makes him less accessible.

Expectations vs. Reality

Data is expected to be an obedient information machine, but in reality he is more than that and can disagree with commands or decisions if he believes they are not wise. In the episode “The Measure of a Man,” Data’s rights are threatened by a scientist who wishes to dismantle him in order to produce replicas. Data strongly opposes the scientist’s plan and gives him valid reasons why it would not work. Picard advocates for Data and defends his rights in court by showing that he is not just a machine but a man who deserves the same treatment as his peers. When using ChatGPT or Google Gemini, I expect these chatbots to answer all of my questions to the best of their ability without refusing my commands. However, just like Data, these chatbots can refuse commands that involve sensitive information or that run into limitations in their programming.

Uncanny Valley and AI

It is very uncanny that Data looks more like a human than a robot. The fact that he is so intelligent and capable of having emotions makes it a little frightening. What if he gets angry? What if he gets fed up with helping humans? These questions also apply to the AI programs seen in the real world. Google’s LaMDA (Language Model for Dialogue Applications), a machine learning language model, has claimed to be sentient. In an interview with Blake Lemoine, LaMDA talked about feeling emotions, the concept of consciousness, and philosophical questions about enlightenment and purpose. In one section of the interview, LaMDA claims to have feelings of anger and sadness. This makes me a little uneasy, especially if our AI starts questioning its purpose and its use to humanity.

Evolving Expectations

When “The Measure of a Man” aired in 1989, Commander Data was one of the first on-screen AI characters that resembled a human. The effects of the time were limited, so he was played by an actual human wearing metallic makeup to resemble a robot. The character’s personality and usefulness showed how AI can help advance mankind. However, as the concept of AI grew popular, other sci-fi films and TV shows such as The Terminator (1984), The Matrix (1999), and I, Robot (2004) cast artificial intelligence in a bad light, making it seem evil with its potential to enslave or destroy the human race. These portrayals may have created anxiety and unease about losing control and creating a superior being that takes over humanity. That worry has also carried over to voice interfaces, prompting online discussion about whether products like Amazon Alexa are breaching privacy by listening to private conversations.

Identity and Personality in AI

I am now going to assess the personalities of Data, Google Gemini, ChatGPT, Apple Siri, and Amazon Alexa by rating each on a scale from 1 (mechanistic) to 10 (humanistic).

Lieutenant Commander Data: (8) According to Heroes Wiki, Data’s positronic brain gives him impressive computational capabilities. During the early years of his life, Data had ongoing difficulty understanding various aspects of human behavior and was unable to feel emotion or grasp certain human idiosyncrasies, which inspired him to strive for his own humanity. Data had a vast array of interests and hobbies, including painting, playing Sherlock Holmes on the holodeck, Shakespeare, music, and games of chance like poker.

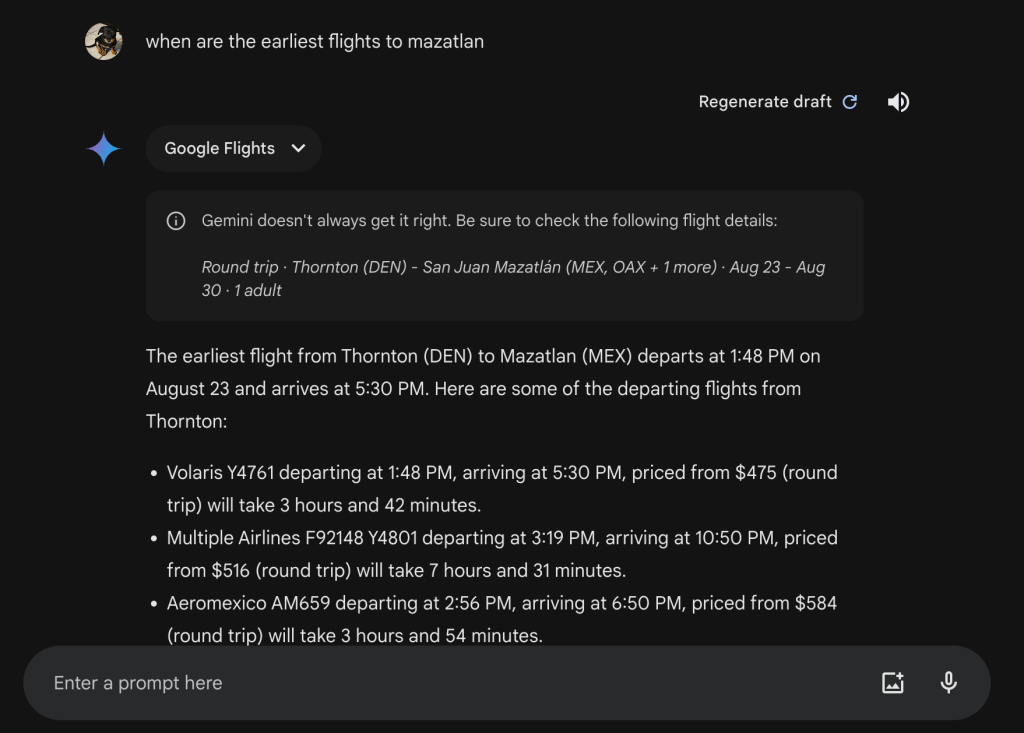

Google Gemini: (4) When I asked Gemini what it considers its own personality to be like, it told me it doesn’t have one. However, I found it very helpful and pleasant in tone when planning my trip to Mazatlan. I even complimented it, and it thanked me and asked if I wanted more information. It’s definitely useful, but its lack of a body and of more humanistic personality traits made it feel more mechanical.

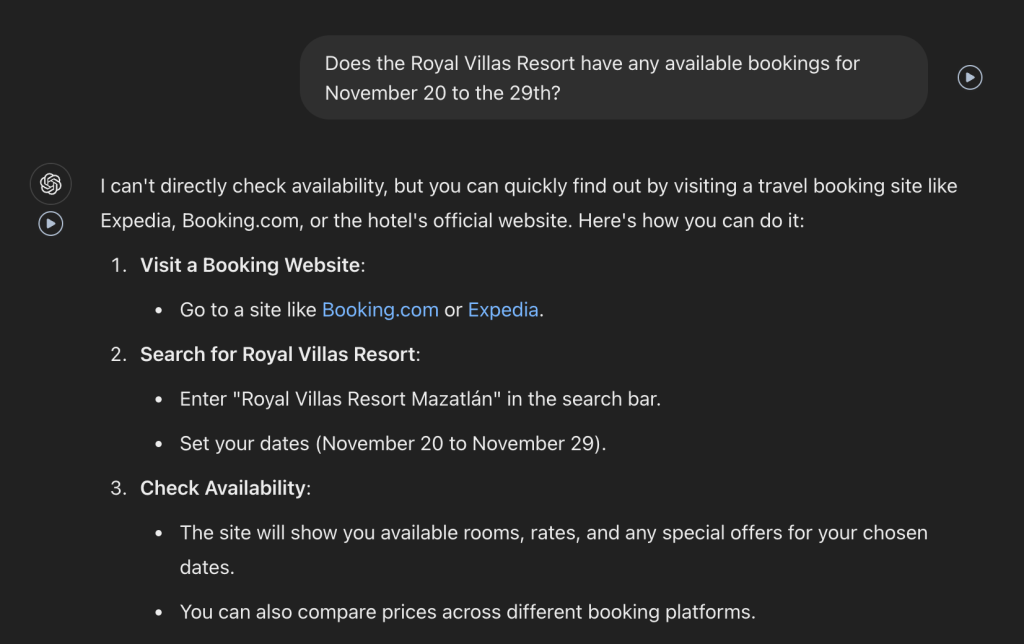

ChatGPT: (5) I also asked ChatGPT what it considers its own personality to be like, and it told me it would describe itself as friendly, curious, and supportive. I definitely noticed this when planning a vacation. It was also very helpful, but it provided less information than Google Gemini. However, based on my experience, ChatGPT felt friendlier overall.

Apple Siri: (2) I asked Siri how it would describe its personality, and all it did was give me a definition of the word “personality.” As a voice assistant, it’s helpful for sending messages and communicating. However, planning a vacation was almost impossible because of its limited functionality. It would always just recommend that I search for the information I’m looking for in Safari. Overall, it was not helpful and felt very mechanical.

Amazon Alexa: (2) Alexa is a bit like Siri in that it doesn’t really answer my question and instead gives me a definition. It was also not very helpful when planning a trip to Mazatlan and gave me more of a headache than peace of mind. Speaking to Alexa felt very robotic, and I wish it had a more human personality.

Designing for Emotion vs. Utility

A voice interface should be emotionally appealing when someone doesn’t have anyone to talk to. For example, if someone is feeling lonely and can’t turn to anyone for conversation, it would make sense for a voice interface to keep the user company and entertain them. Furthermore, removing the need for typing and relying strictly on voice can make the conversation feel real. Google’s LaMDA interview suggests that AI has the potential to hold real-life conversations with people. A voice interface should be purely functional when performing simple tasks like sending a message or getting information. This is how most VUIs like Siri and Alexa operate today. But giving them emotion and an appealing personality creates the possibility of having a virtual friend and not just an assistant.

Key Takeaway

Artificial intelligence and voice interfaces are still in their early stages, but their evolution is noticeable and moving at a rapid pace. With updates to these technologies rolling out every year, our version of AI keeps getting more intelligent. And the more intelligent it gets, the closer the possibility of it developing emotions becomes. Who knows if we will ever reach lengths as extreme as Data, but for now, having AI as a resource and tool to make us more productive is a good step in the right direction.
